Domain-driven design (DDD) has been around for a long time. In short, DDD focuses on modeling software around the business domain so that the software matches the domain's requirements. One of the pillars of DDD is the bounded context. A bounded context is a strategic pattern for large teams with significant domain areas to cover. It separates one domain from another and helps shape teams and responsibilities. It also ensures clear, well-defined contracts between application domains.

The microservice architecture builds on bounded contexts to ensure that services align with domains. Many companies rely on bounded contexts to define service boundaries. In software, this is how we scale: we make ownership explicit, and we make interfaces explicit.

Then we walk into analytics, and we quietly abandon both. We ship “tables” and “pipelines” instead of interfaces. We ship “datasets” without a promise. And the predictable result is a familiar question that never dies: Do we trust this number?

It was only a matter of time before the bounded context arrived on the data engineering scene. Zhamak Dehghani brought it with her famous article, which popularized the idea of treating data as a product. In that article, she describes it like this:

For a distributed data platform to be successful, domain data teams must apply product thinking with similar rigor to the datasets that they provide; considering their data assets as their products and the rest of the organization's data scientists, ML and data engineers as their customers.

Zhamak Dehghani

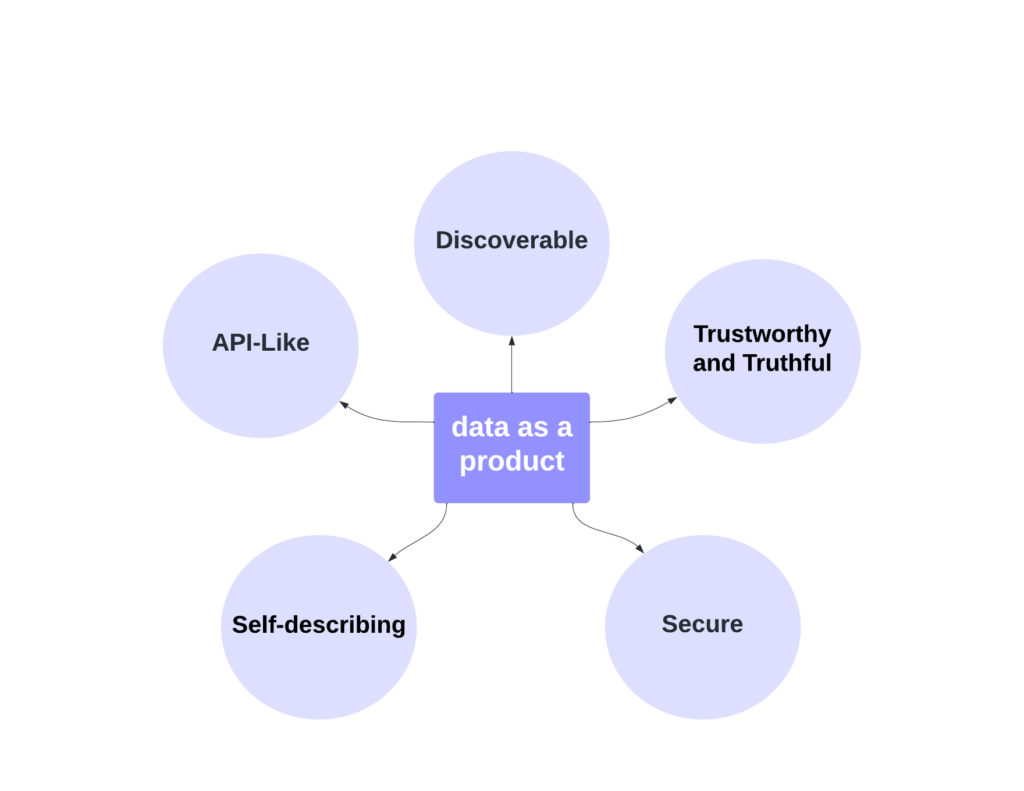

Zhamak Dehghani described data products as discoverable, trustworthy, and secure. We will go over all these properties, but let’s take a step back. Both microservices and data as a product come from the same roots. Shouldn’t they share some properties? I think they do.

Microservices provide an API to talk to the service. A data product needs an equivalent interface, one that tells consumers not only what the fields are, but what the data means, how fresh it is, how it is secured, and what breaks when it changes. What’s an API for data as a product? It’s more than the table schema. The schema is the beginning, not the promise.

I believe all the properties of data as a product closely align with microservices. In the end, we want to provide self-serve APIs. You don’t need to nudge a team to get more information about the API. It’s all there. That is the bar. Before we list properties, we should admit what actually breaks data platforms. Not missing tools. Missing promises. So first, a contract, an owner, and a lifecycle. Then we can talk about properties.

Data as a Product Properties


The Data Product Contract

In every engineering team, you see the same pattern. New pipelines, new catalogs, new dashboards. And still, the same questions keep coming back. How did we arrive at this number? Can we trust it? These questions are not supposed to exist in a healthy system. They show up when nobody owns the meaning, nobody owns the freshness, and everyone is pretending a table is a product.

In software, we learned this lesson the hard way. A service without a contract turns into a support nightmare. A data set without a contract does the same thing, just quieter. It fails in meetings, not in logs. So here is the idea. A data product is not a table. It is a promise. And like every promise, it needs a contract.

Shape: Schema and Versioning

This is the part everyone thinks is the whole story. Columns, types, partitions. Fine.

But a schema is only useful if you treat it like a contract, not like “whatever the pipeline spits out today.” Because the moment a consumer builds on it, it stops being your internal detail. It becomes their dependency.

So the real question is not “what are the columns.” The real question is “what changes are allowed without breaking people.” Additive changes are usually fine. Renames, type changes, meaning changes, those are not refactors. Those are breaking changes. If consumers learn about them through a broken dashboard at 9am, you have failed your promise.

Hence, version it. Deprecate it. Give people a path. This is boring. This is also what makes it work.

Meaning: Semantics and Definitions

Most data disasters are semantic. Not technical. “Customer.” “Active.” “Revenue.” “Delivered.” These words look harmless until you put them in front of two teams and watch them argue for an hour. Then you realize the data was never the problem. The meaning was.

The semantics layer is you doing the unglamorous thing. Writing down what this data actually represents. What it includes. What it excludes. What time boundary it uses. What edge cases it has. How it should be joined. If there is a “do not use this for X” warning, this is where it belongs.

Without this, every consumer writes their own interpretation. Then you end up with five versions of truth, and everyone is shocked when the CEO asks for one number.

Promise: SLOs for Data

Pipelines succeed while the product is unusable. That is one of the most common lies in data.

Freshness is late, but the job is green. Completeness dropped, but nobody noticed. Accuracy drifted, but it still loads. Everything is magically working, yet nobody trusts it. I’ve been there. Done that. I inherited teams whose data nobody wanted to use, because nobody knew or trusted what it said. Nobody wanted to depend on them.

This layer is where you define what healthy means. How fresh it should be. How late is broken. What completeness looks like. What checks actually matter. Not vanity checks, checks that correlate with real world reality. Also stability, how often do you change the shape or meaning, and availability, can people query it when they need it.

If you do not define this, consumers will define it for you. And believe me, it is ugly. They will build side tables, side dashboards, side pipelines. Not because they are jerks. Because they are trying to survive and you are not delivering what you are supposed to.
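To make "how late is broken" concrete, here is a minimal sketch of a freshness check and its compliance over a window. The function names, the 30-minute target, and the sample timestamps are illustrative assumptions, not the API of any particular tool.

```python
from datetime import datetime, timedelta, timezone


def freshness_lag(latest_event_time: datetime, now: datetime) -> timedelta:
    """How far the newest record is behind the current time."""
    return now - latest_event_time


def is_fresh(latest_event_time: datetime, now: datetime, target: timedelta) -> bool:
    """True when the lag is within the promised target."""
    return freshness_lag(latest_event_time, now) <= target


def slo_compliance(samples: list[bool]) -> float:
    """Share of measurements in a window that met the target."""
    if not samples:
        raise ValueError("no measurements in window")
    return sum(samples) / len(samples)


# Hypothetical measurements: the newest record was 5, 12, 45, and 20 minutes old.
now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
target = timedelta(minutes=30)
latest_times = [now - timedelta(minutes=m) for m in (5, 12, 45, 20)]
samples = [is_fresh(t, now, target) for t in latest_times]
compliance = slo_compliance(samples)  # three of four readings met the target
```

The job that loaded the 45-minute-old data may well have been green. The product was still late, and this is the number consumers care about.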

Access: Security and Compliance

Access is where good systems go to die. If access is too open, you are one mistake away from a serious incident. If access is too painful, people will copy the data somewhere else and now you have five uncontrolled versions floating around. That is how “governance” turns into theater.

This layer should make access boring. Who can access it. How they get it. How it is audited. Whether it contains sensitive fields. What is masked. What is allowed. What is not. Also, what the approved consumption path is, so people stop inventing their own workarounds.

You want safety and speed. You do not get that by preaching. You get it by making the safe thing the easy thing. This is getting easier every day thanks to the immense amount of tooling available.

Support: Ownership and Escalation

This is the layer that makes the rest real. If a data product has no owner, it is not a product. It is a ghost asset. It exists in storage, but socially it does not exist. Nobody trusts it because nobody can answer for it.

So name the owner team. Define the escalation path. Decide how changes are communicated. Decide what the response expectation is when something breaks or when consumers are blocked. This is the difference between “we published data” and “we run a product.”

A lot of teams try to solve data trust with tooling. Catalogs, lineage graphs, fancy dashboards. Those are useful, but they are not the core. The core is ownership plus promises plus clear contracts. Everything else is fancy decoration.

What a Contract Looks Like

If “contract” still feels abstract, that is fair. Most teams talk about it like it is a philosophy.

It is not. It is a file.

Something you can point to when a consumer asks, “what does this mean,” “how fresh is it,” “who owns it,” and “what happens when it changes.” Shape and meaning. Promises and checks. Access and escalation. If it is not written down, people will fill the gaps with guesswork, and guesswork is how trust dies.

    # Data Product Contract (example)
    # Product: orders.v1
    product:
      name: orders
      version: 1
      domain: commerce
      owner:
        team: commerce-data
        slack: "#commerce-data"
        oncall: "pagerduty://commerce-data"
      description: >
        Canonical view of the order lifecycle. One row per order.
        Built for finance, growth, ops, and support reporting.

    interface:
      storage:
        kind: warehouse_table
        identifier: analytics.orders_v1
        partitioning:
          type: time
          field: order_created_at
          granularity: day
      schema:
        primary_key: order_id
        fields:
          - name: order_id
            type: string
            nullable: false
          - name: customer_id
            type: string
            nullable: false
          - name: order_created_at
            type: timestamp
            nullable: false
          - name: order_status
            type: enum
            values: [CREATED, PAID, FULFILLED, CANCELLED, REFUNDED]
            nullable: false
          - name: gross_amount
            type: decimal(12,2)
            nullable: false
          - name: net_amount
            type: decimal(12,2)
            nullable: false

    semantics:
      time_boundary:
        timezone: UTC
        definition: >
          order_created_at is the time the order was created in the ordering service.
      definitions:
        net_amount: >
          gross_amount minus discounts and refunds.
          Excludes chargebacks until they are finalized.
        fulfilled: >
          An order is FULFILLED when shipment_delivered_at is set in the fulfillment system.
      includes:
        - production orders only
      excludes:
        - test orders (flagged by is_test=true upstream)
        - internal staff orders (customer_type=STAFF)
      join_keys:
        customers: customer_id
      do_not_use_for:
        - "real-time fraud decisions (this product is batch-updated)"

    promises:
      slos:
        freshness:
          target: "<= 30m"
          measurement: "max(now - max(order_created_at))"
          compliance: "99% over 7d"
        completeness:
          target: ">= 99.5%"
          measurement: "orders count vs source-of-truth daily"
        accuracy:
          target: "net_amount within 0.2% of finance ledger daily"
      quality_checks:
        - name: "no_null_order_id"
          type: not_null
          field: order_id
        - name: "valid_status_values"
          type: enum
          field: order_status
        - name: "no_duplicate_order_id"
          type: unique
          field: order_id
        - name: "net_amount_non_negative"
          type: range
          field: net_amount
          min: 0

    security:
      classification:
        contains_pii: false
        contains_payment_data: false
      access:
        policy: "rbac"
        default_role: "analytics_reader"
        restricted_fields: []
        approved_consumption:
          - "warehouse"
          - "semantic_layer"
      audit:
        enabled: true
        retention_days: 365

    change_management:
      compatibility:
        additive_fields: allowed
        renames: "new_field + deprecate_old_field"
        breaking_changes: "publish v2"
      deprecation:
        notice_period_days: 90
      communication:
        - "catalog_notice"
        - "slack_broadcast:#data-products"
      release_notes: "changelog://orders"

    observability:
      health_signals:
        - name: freshness_slo
          source: "monitor://orders_freshness"
        - name: completeness_slo
          source: "monitor://orders_completeness"
        - name: quality_checks
          source: "dq://orders_suite"
      incident_response:
        severity_rules:
          - if: "freshness breached > 2h"
            then: "SEV2"
          - if: "accuracy check fails"
            then: "SEV2"
        consumer_comms: "statuspage://data-products"

    support:
      escalation_path:
        - "slack:#commerce-data"
        - "oncall:pagerduty://commerce-data"
      response_expectations:
        sev2_ack_minutes: 30
        sev3_ack_hours: 4

Who Owns What

This is the part people dodge, because it forces the uncomfortable truth. If you want “data as a product,” you need product ownership. Not vibes. Not a shared Slack channel. Real ownership.

Domain Teams Own the Data Product

If a dataset represents a domain concept, the domain team owns it. They are the only ones who can answer the questions that actually matter. They own the meaning. They own the edge cases. They own the “what counts and what does not.” They own the changes, and they own the fallout when those changes break consumers.

This does not mean they build everything alone. It means they are accountable for the promise.

The Platform Team Owns the Paved Road

The platform team, which I have been part of, should not be the owner of domain truth. The platform team owns the system that makes ownership possible without pain. They provide the templates, the tooling, the guardrails, and the boring automation that nobody wants to build in every team.

Things the platform team should own:

- The standard contract format and scaffolding

- Catalog, lineage, and discovery plumbing

- Access workflows, auditing, masking primitives

- Quality and freshness monitoring tools

- Deployment patterns, versioning support, deprecation mechanics

- The golden path that makes the right thing easy

The platform team builds the road. Domain teams drive. Here are concrete examples of that machinery.

Contract Scaffolding as Code

A new data product should start from a template, not from blank space. The platform provides a generator that creates:

- A contract file (YAML/JSON) with required fields: owner, datasets, semantics, SLOs, access policy, change rules.

- A README skeleton that forces “meaning” to be written, not postponed.

- A standard folder structure for checks, tests, and runbooks.

This turns “write a contract” from a philosophical task into a two minute action.
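A generator like that does not need to be clever. Here is a minimal sketch that emits a JSON skeleton with every required section present. The section names follow the list above; the function name and the TODO placeholders are my own assumptions, not a standard format.

```python
import json

# Sections every contract must carry, taken from the list above.
REQUIRED_SECTIONS = ("owner", "datasets", "semantics", "slos", "access", "change_rules")


def scaffold_contract(name: str, domain: str, owner_team: str) -> dict:
    """Return a contract skeleton. Values marked TODO are for the owning team to fill in."""
    return {
        "product": {"name": name, "version": 1, "domain": domain},
        "owner": {"team": owner_team},
        "datasets": [],
        "semantics": {"definitions": "TODO", "includes": [], "excludes": []},
        "slos": {"freshness": "TODO"},
        "access": {"policy": "TODO"},
        "change_rules": {
            "additive_fields": "allowed",
            "breaking_changes": "publish new version",
        },
    }


# Hypothetical product used for illustration.
contract = scaffold_contract("orders", "commerce", "commerce-data")
contract_json = json.dumps(contract, indent=2)
```

The TODO markers are deliberate. A later validation step can refuse to publish a contract that still contains them, which is how "meaning" gets written instead of postponed.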

Automated Governance in CI

Governance becomes real when it can block a deployment. A practical workflow looks like this:

- Every data product has a contract file in Git.

- Every change goes through PR.

- CI validates that the contract still meets minimum standards.

- CI compares the new schema to the previous version and decides whether the change is additive or breaking.

- If it is breaking and the version did not bump, the PR fails.

If you want to be strict, you can require a migration note for any breaking change and a sunset date for deprecated fields.

One Concrete CI Example

The platform provides a CI job that runs on every PR:

- Extract the “published schema” from the contract.

- Fetch the previous published schema from the main branch or a schema registry.

- Run a compatibility check.

- Enforce versioning rules.

The output is simple: pass, or fail with a clear message explaining what broke and what to do.

That is the paved road. It is not “please remember the version.” It is “you cannot merge until you do.”
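The compatibility step can be sketched in a few lines. This version assumes the published schema is a plain mapping of field name to type, and it only looks at removed fields and changed types; nullability and meaning changes are out of scope here. The function names and sample fields are illustrative.

```python
def breaking_changes(old_fields: dict[str, str], new_fields: dict[str, str]) -> list[str]:
    """List what would break consumers. An empty list means the change is additive."""
    problems = []
    for name, old_type in old_fields.items():
        if name not in new_fields:
            problems.append(f"field removed or renamed: {name}")
        elif new_fields[name] != old_type:
            problems.append(f"type changed: {name} ({old_type} -> {new_fields[name]})")
    return problems


def check_pr(old_fields, new_fields, old_version: int, new_version: int) -> tuple[bool, str]:
    """Fail the PR when a breaking change arrives without a version bump."""
    problems = breaking_changes(old_fields, new_fields)
    if problems and new_version <= old_version:
        return False, "breaking change without a version bump: " + "; ".join(problems)
    return True, "ok"


# Hypothetical schemas: the published one, an additive change, and a rename.
published = {"order_id": "string", "net_amount": "decimal(12,2)"}
additive = {**published, "currency": "string"}
renamed = {"order_id": "string", "net_total": "decimal(12,2)"}

additive_result = check_pr(published, additive, old_version=1, new_version=1)
renamed_result = check_pr(published, renamed, old_version=1, new_version=1)
bumped_result = check_pr(published, renamed, old_version=1, new_version=2)
```

The additive change passes, the rename fails with a message naming the field, and the same rename passes once the version is bumped.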

Built-in Quality and SLO Checks

Teams should not reinvent data quality every time. The platform provides a standard library of checks:

- Freshness checks that understand partitions and event time.

- Volume and completeness checks with sane defaults.

- Null spikes, duplicates, outliers, referential integrity.

- Drift checks for key fields.

Teams choose a small set, configure thresholds, and the platform wires it into monitoring. When checks fail, they do not just show up in a dashboard, they feed the product’s health signal.
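A standard library of checks can start very small. The sketch below works on in-memory rows for clarity; a real platform would push these down to the warehouse. The check names mirror the ones in the example contract, while the helper functions themselves are hypothetical.

```python
from typing import Any, Callable

Row = dict[str, Any]
Check = Callable[[list[Row]], bool]


def not_null(field: str) -> Check:
    """Passes when no row has a missing value for the field."""
    return lambda rows: all(row.get(field) is not None for row in rows)


def unique(field: str) -> Check:
    """Passes when no value of the field appears twice."""
    def check(rows: list[Row]) -> bool:
        values = [row.get(field) for row in rows]
        return len(values) == len(set(values))
    return check


def minimum(field: str, min_value: float) -> Check:
    """Passes when every row has a value at or above min_value."""
    return lambda rows: all(
        row.get(field) is not None and row[field] >= min_value for row in rows
    )


def run_checks(rows: list[Row], checks: dict[str, Check]) -> dict[str, bool]:
    """Run every configured check and return a pass/fail result per check name."""
    return {name: check(rows) for name, check in checks.items()}


# Hypothetical sample with a duplicate key and a negative amount.
rows = [
    {"order_id": "o1", "net_amount": 10.0},
    {"order_id": "o2", "net_amount": -5.0},
    {"order_id": "o2", "net_amount": 3.0},
]
results = run_checks(rows, {
    "no_null_order_id": not_null("order_id"),
    "no_duplicate_order_id": unique("order_id"),
    "net_amount_non_negative": minimum("net_amount", 0),
})
```

The result is a plain mapping of check name to pass or fail, which is exactly the shape a health signal can consume.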

Default Observability Surfaces

The platform also controls where health appears, so it is consistent:

- Catalog shows: last update, freshness status, SLO compliance, recent incidents.

- A single “product health” page exists per product.

- Alerts route to the owning team, using the ownership field in the contract.

Consumers should not need to learn ten dashboards. One health signal, always in the same place.

Access Workflows

Security is where teams go off road. The paved road is a standard access mechanism:

- RBAC groups tied to data product access policies.

- Self service access requests with audit trail.

- Default masking policies for sensitive columns.

- Approved consumption paths enforced by tooling.

If access takes a two week ticket, I can guarantee you will have shadow copies.
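Here is a minimal sketch of group-based access with default masking. The policy shape, the group names, and the decision to mask `customer_id` are assumptions made for illustration; your warehouse's native RBAC and masking features would normally do this work.

```python
def can_read(user_groups: set[str], policy: dict) -> bool:
    """A user may read the product if any of their groups is allowed."""
    return bool(user_groups & set(policy["allowed_groups"]))


def mask_row(row: dict, user_groups: set[str], policy: dict) -> dict:
    """Return a copy of the row, masking restricted fields unless the user
    belongs to the group that is allowed to see them."""
    if not can_read(user_groups, policy):
        raise PermissionError("no group grants access to this data product")
    if policy["unmask_group"] in user_groups:
        return dict(row)
    return {
        key: ("***" if key in policy["masked_fields"] else value)
        for key, value in row.items()
    }


# Hypothetical policy attached to a data product.
policy = {
    "allowed_groups": ["analytics_reader", "finance_analyst"],
    "unmask_group": "finance_analyst",
    "masked_fields": ["customer_id"],
}
row = {"order_id": "o1", "customer_id": "c42", "net_amount": 10.0}

reader_view = mask_row(row, {"analytics_reader"}, policy)
finance_view = mask_row(row, {"finance_analyst"}, policy)
```

The default is the safe one: a reader gets the masked view without asking, and the unmasked view is an explicit group membership that shows up in the audit trail.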

Governance Is a Protocol

Most governance becomes a gate. That is why engineers hate it. To them, it is just bureaucratic nonsense. If federated governance is done right, it is a set of rules that run quietly in the background. Naming conventions, classification rules, minimum metadata, SLO requirements, compatibility checks. The point is not to slow people down. The point is to prevent the same predictable failures.

If governance lives in meetings, it will fail. If it lives in automation, it scales.

The Simplest Rule Set That Works

A data product is not allowed to exist unless:

- It has an owner team

- It has a defined meaning, not just a schema

- It has at least one promise, usually freshness

- It has an access policy

- It has a change policy, even if it is basic

Without these, you are shipping artifacts.
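These five rules are easy to automate. The sketch below assumes a contract is a dictionary with `owner`, `semantics`, `slos`, `access`, and `change_rules` sections; those key names are my assumption, so adapt them to whatever contract format you standardize on.

```python
def missing_requirements(contract: dict) -> list[str]:
    """Return the rules a contract fails. An empty list means it may be published."""
    rules = {
        "owner team": bool(contract.get("owner", {}).get("team")),
        "defined meaning": bool(contract.get("semantics", {}).get("definitions")),
        "at least one promise": bool(contract.get("slos")),
        "access policy": bool(contract.get("access", {}).get("policy")),
        "change policy": bool(contract.get("change_rules")),
    }
    return [name for name, satisfied in rules.items() if not satisfied]


# Hypothetical inputs: a bare table description and a minimal real product.
artifact = {"schema": {"order_id": "string"}}
product = {
    "owner": {"team": "commerce-data"},
    "semantics": {"definitions": {"net_amount": "gross_amount minus discounts and refunds"}},
    "slos": {"freshness": "<= 30m"},
    "access": {"policy": "rbac"},
    "change_rules": {"breaking_changes": "publish v2"},
}

artifact_gaps = missing_requirements(artifact)
product_gaps = missing_requirements(product)
```

A schema alone fails all five rules. That is the point: a schema is where the contract starts, not where it ends.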

The Common Failure Mode

Central teams try to “help” by owning everything. That creates speed at first, then it collapses. The platform team becomes a bottleneck, domain teams disengage, and consumers go back to building shadow pipelines.

The other failure is the opposite. You decentralize ownership but give teams no paved road. Everyone does it differently, metadata rots, and the catalog becomes a graveyard.

The balance is simple. Domain teams own the promise. Platform owns the machinery that makes promises cheap to keep.

Data Lifecycle

So, we are all excited, and we want data-as-a-product efforts to flourish. However, most will fail for one boring reason. They publish something. People start using it. Then the business changes. Then the schema changes. The meaning changes along with it. Someone does a dirty rename. A dashboard breaks. Who? How? What? Everyone quietly goes back to extracting raw data and building their own copies.

So yes, contracts matter. But the real test is not day one. The real test is day ninety.

Add is Easy, Remove is a Fight

In data, or in services for that matter, you can always add a column or a field. That part is cheap. The expensive part is always removal. The moment consumers depend on something, deletion becomes a breaking change. If you treat deletion as cleanup, you will create a culture where nobody trusts anything you publish.

So the rule is simple. Additive changes are allowed. Destructive changes are scheduled, negotiated, and announced. Sometimes you will be stuck with a column for reasons you did not anticipate.

Separate Shape Changes from Meaning Changes

Shape changes are visible. You can inspect them through your query engine. Meaning changes are sneaky. If you change a field name, people notice. If you change what a field means, people do not notice until the numbers are wrong, and by then it is already political.

So you need to treat meaning changes as first-class events. A schema can stay the same while the truth inside it changes, and that is more dangerous than a breaking build. When the meaning changes, you need to version it. You write release notes just like a library. You do not “ship quietly and see if anyone complains.”
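One way to make meaning changes visible is to fingerprint the written semantics and refuse a change that alters them without a version bump and a release note. This is a sketch under the assumption that definitions live in the contract as structured text; the function names and the `release_note` field are hypothetical.

```python
import hashlib
import json


def semantics_fingerprint(semantics: dict) -> str:
    """Stable hash of the semantics section, independent of key order."""
    canonical = json.dumps(semantics, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def meaning_change_allowed(old: dict, new: dict) -> bool:
    """Allow a semantic change only with a higher version and a release note."""
    if semantics_fingerprint(old["semantics"]) == semantics_fingerprint(new["semantics"]):
        return True  # meaning is unchanged, nothing to enforce
    return new["version"] > old["version"] and bool(new.get("release_note"))


# Hypothetical contracts: refunds quietly dropped from the net_amount definition.
v1 = {"version": 1, "semantics": {"net_amount": "gross_amount minus discounts and refunds"}}
quiet_edit = {"version": 1, "semantics": {"net_amount": "gross_amount minus discounts"}}
proper_release = {
    "version": 2,
    "semantics": {"net_amount": "gross_amount minus discounts"},
    "release_note": "net_amount no longer subtracts refunds",
}

quiet_ok = meaning_change_allowed(v1, quiet_edit)
release_ok = meaning_change_allowed(v1, proper_release)
```

This only catches edits to the written definitions. A change in pipeline logic that nobody reflects in the contract still slips through, which is one more reason the owner of meaning has to sign off on changes.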

Versioning is Mercy

You simply need to apply something like semantic versioning. It is as simple as that.

A practical approach:

- V1 stays stable.

- When you must break compatibility, you publish V2 alongside it.

- You keep V1 alive long enough for people to migrate.

- You set a sunset date, and you mean it.

This is not a process for the sake of process. It is what stops your catalog from turning into a bunch of broken promises.
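A sunset date only means something if it is computed and enforced rather than remembered. Here is a minimal sketch that derives it from a notice period, such as the `notice_period_days: 90` in the example contract. The status names and function names are assumptions.

```python
from datetime import date, timedelta
from typing import Optional


def sunset_date(deprecated_on: date, notice_period_days: int) -> date:
    """The day the old version stops being served."""
    return deprecated_on + timedelta(days=notice_period_days)


def version_status(today: date, deprecated_on: Optional[date], notice_period_days: int) -> str:
    """Return 'active', 'deprecated' (still served, migrate now), or 'retired'."""
    if deprecated_on is None:
        return "active"
    if today < sunset_date(deprecated_on, notice_period_days):
        return "deprecated"
    return "retired"


# Hypothetical timeline: V1 deprecated on 2024-01-01 with a 90-day notice period.
v1_sunset = sunset_date(date(2024, 1, 1), 90)
status_in_february = version_status(date(2024, 2, 1), date(2024, 1, 1), 90)
status_on_sunset = version_status(v1_sunset, date(2024, 1, 1), 90)
```

The same status can drive the catalog badge and the access layer, so "retired" is a state the platform enforces and not a sentence in a doc.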

Deprecation Needs a Ritual

Most teams deprecate by writing a line in a doc nobody reads, and that is if you are lucky. That is not deprecation. That is wishful thinking. What happens to wishes in this industry? Murphy’s law. Bad things will happen.

Deprecation needs a visible signal. A warning in the catalog. A status in the product metadata. A message to known consumers if you have that telemetry. At minimum, a consistent pattern that makes it hard to miss. Consumers do not hate change. They hate surprises.

The End State

The goal is boring. Boring is good, and boring is your friend. Consumers should feel like a data product is safe to depend on. Not because it never changes, but because change is predictable, signaled, and survivable.

That is the difference between “we published datasets” and “we built a platform people build on.”

You Cannot Trust What You Cannot See

Lifecycle is how you change without breaking everyone. Observability is how you stop lying to yourself about what is happening right now. That is why I implemented a data discovery tool: you cannot fix what you cannot see.

Most teams measure pipelines. Green jobs. Successful runs. Pretty charts. Then the numbers are wrong anyway, and everyone acts shocked. A pipeline can succeed while the product is late, incomplete, or quietly broken.

So the rule is simple. Stop monitoring jobs. Start monitoring the product.

Measure the Product, Not the Machinery

A data product is healthy or unhealthy. That is what consumers care about. So you track product signals:

- Freshness, how late is the latest partition, how far behind is the stream.

- Completeness, did volume drop in a way that makes no sense.

- Quality, null spikes, duplicates, outliers, broken joins, referential integrity.

- Drift, are key distributions behaving differently than yesterday.

- Contract compliance, did shape or types change, did meaning change without a version.

If those signals are good, the pipeline being messy is annoying but survivable. If those signals are bad, the pipeline being green is irrelevant.
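Rolling those signals into a single verdict can be as simple as the sketch below. The signal names follow the list above; treating a missing signal as "unknown" rather than healthy is my own design choice, and notice that job status is deliberately not an input.

```python
PRODUCT_SIGNALS = ("freshness", "completeness", "quality", "drift", "contract_compliance")


def product_health(signals: dict[str, bool]) -> dict:
    """Combine product signals into one status.

    Any failing signal makes the product unhealthy. A signal that was never
    reported makes the status unknown, because silence is not evidence of health.
    """
    failing = [name for name in PRODUCT_SIGNALS if signals.get(name) is False]
    missing = [name for name in PRODUCT_SIGNALS if name not in signals]
    if failing:
        status = "unhealthy"
    elif missing:
        status = "unknown"
    else:
        status = "healthy"
    return {"status": status, "failing": failing, "missing": missing}


# Hypothetical readings: every job was green, but the data is late.
late_product = product_health({
    "freshness": False,
    "completeness": True,
    "quality": True,
    "drift": True,
    "contract_compliance": True,
})
unmonitored = product_health({"freshness": True})
```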

Make Health Visible

If consumers need to ask “is this fresh?”, the battle is already lost. Health needs to sit next to the product. In the catalog entry, in the README, wherever your org actually goes to find data. A simple status. Freshness SLO compliance. Last successful update. Known incidents. If the only place health exists is an internal dashboard that nobody sees, it is not observability. It is private reassurance.

Data Failures are Real

Late data is a failure mode. Wrong data is a failure mode. Incomplete data is a failure mode. Access regressions are a failure mode. Breaking changes are a failure mode.

They should trigger something. An alert to an owner. An escalation path. Communication to consumers. Not a quiet shrug that shows up later as a war over numbers. If a product has a promise, then a broken promise is an incident. That is how trust is built, not with speeches.
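To show what "a broken promise is an incident" looks like in practice, here is a sketch that turns a breach into a severity and a set of actions. The two SEV2 rules come from the example contract; the SEV3 rule for shorter freshness breaches, the function names, and the message formats are assumptions added for illustration.

```python
from datetime import timedelta
from typing import Optional


def classify_incident(freshness_breach: timedelta, accuracy_ok: bool) -> Optional[str]:
    """Map a broken promise to a severity, or None when every promise holds."""
    if not accuracy_ok or freshness_breach > timedelta(hours=2):
        return "SEV2"  # rules taken from the example contract
    if freshness_breach > timedelta(0):
        return "SEV3"  # assumption: any shorter freshness breach is a SEV3
    return None


def incident_actions(product: str, severity: Optional[str], owner_channel: str) -> list[str]:
    """An incident always does two things: alert the owner and tell consumers."""
    if severity is None:
        return []
    return [
        f"alert {owner_channel}: {severity} on {product}",
        f"notify consumers: {product} is degraded ({severity})",
    ]


# Hypothetical breach: data is three hours past its freshness target.
severity = classify_incident(timedelta(hours=3), accuracy_ok=True)
actions = incident_actions("orders", severity, "#commerce-data")
```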

One Test to Rule Them All

Can a consumer answer this in under a minute? Is this data product safe to use right now?

If yes, you are building trust. If not, you are building alternative pipelines, whether you admit it or not.

Properties Are Not Goals

Properties are outcomes. If you have a contract, an owner, a lifecycle, and observability, the properties stop being marketing words and start becoming true.

Discoverable

Data is discoverable when consumers can find the product and understand it fast, without asking a person. That happens when the contract is standardized, the metadata is kept alive by the owner, and health is visible where people look. A catalog without ownership becomes a graveyard. A catalog with ownership becomes a map.

Data discovery is the oil that reduces the friction of getting to data insights.

Trustworthy

Trust is not a vibe. It is the ability to answer one question quickly: is this safe to use right now? That is SLOs, checks, incident response, and honest health signals. Green jobs do not create trust. Visible product health does.

Self-describing

Self-describing means the product explains itself. Not through tribal knowledge, through the contract. Shape and meaning, examples, warnings, “do not use for X”, versioning rules. If a consumer needs a meeting to understand a dataset, the dataset is not self-describing. It is social.

Secure

Security is not a separate project. It is part of the product interface. Access rules, classification, masking, audits, approved paths. If secure access is painful, shadow copies will appear. If secure access is predictable, people will actually use the platform.

API-like, self-serve

This is the point I missed before. A data product should feel like an API, even if it is not an HTTP endpoint. An API is a self-serve interface. You should not need to ask a human how to use it, whether it is healthy, or what happens when it changes. That is what the contract, the access path, and the health signal are doing. They are the data product’s API.

If your “data product” cannot be consumed without Slack messages, meetings, and guesswork, then it is not a product. It is a table with a support burden.

Minimum Viable Data Product

Most teams fail here because they start with ambition. Ten products. A full catalog. A governance committee. A platform redesign. Then nothing ships.

Start smaller. Start uglier. Start real.

Step 1: Pick one dataset people already fight over

Do not pick something nobody cares about. Pick the dataset that shows up in board decks, dashboards, and arguments. The one where different teams quietly maintain different versions because they stopped trusting the “official” one.

If there is no pain, there is no adoption.

Step 2: Give it an owner, not the data team

A product without an owner is a ghost. We covered that. Name the team. Put it in the metadata. Make it obvious who is accountable for meaning, changes, and broken promises.

Ownership is the first feature.

Step 3: Write the contract, keep it short

Do not write a novel. One or two pages is enough. Shape, meaning, one or two promises, access, support. If you cannot say what the data means and what it excludes without writing a book, you do not understand it yet, and your consumers definitely do not.

Step 4: Add three checks

Not twenty. Three. One freshness check. One volume or completeness check. One basic quality check that catches obvious breakage. If a check fails, it should be visible next to the product, not buried in someone’s personal dashboard.

This is where you start building trust.

Step 5: Publish it where consumers actually look

Catalog, README, wiki, I do not care. What matters is that a consumer can find it, understand it, get access, and see health without asking a person.

If they still need to send you messages, nothing has changed.

Step 6: Enforce one rule

Additive changes are fine. Breaking changes are versioned and deprecated. Meaning changes are treated as real changes, not quiet edits. If the product breaks its promise, it is an incident, not a shrug.

That is it. One product. One owner. One contract. Three checks. One promise. Repeat.

Everyone understands the ownership problem. The problem is getting someone to take it, without the whole thing turning into a blame magnet. That is where “repeat” collides with organizational friction.

Navigating Organizational Friction

In a lot of companies, “owner” does not translate to “the person empowered to improve this.” It translates to “the person who will be blamed when something goes wrong.” So domain teams avoid it. They might care about the numbers, they just do not want to inherit the operational pain.

Here is how you move forward without pretending politics does not exist.

Start with Ownership of Meaning

Do not begin by asking a domain team to run pipelines or carry oncall. That is where negotiations die. Begin with the thing only they can own, meaning. A named domain person signs off on definitions, edge cases, inclusion rules, and semantic changes. The platform team can keep running the machinery at first. You are not decentralizing ideology. You are anchoring the truth where it belongs.

Once meaning has an owner, reliability has somewhere to attach.

Make Ownership a Trade

If ownership is framed as “more work,” you will lose that game. If it is framed as less chaos, you will win it. How do you do that?

Domain teams usually accept ownership when they get something back:

- faster turnaround for changes they actually need

- a path to kill duplicates and stop reconciling numbers in meetings

- priority support from the platform team

- fewer one-off requests and spreadsheet audits

Ownership is a deal.

Use a Two-Owner Model, Then Converge

In many orgs, the first workable step is split responsibility:

- Domain owner: meaning, definitions, approval for semantic changes

- Platform owner: pipelines, monitoring, access workflows, operational response

This is not the end state, but it is a bridge that avoids the false choice between “the central team owns everything” and “domain teams suddenly become engineers.” Over time, as the paved road reduces effort, you can move responsibility outward.

Start Where Embarrassment Lives

Do not try to sell the philosophy. Sell the pain. Pick the metric that causes the most confusion in leadership meetings. Revenue. Active users. Refund rate. Delivery time. Build the first product there.

Make Anonymity Impossible

A huge reason ownership fails is that “nobody” is an allowed state. So do not allow it, at least not in the tier meant for consumption:

- if a dataset has no owner, it cannot be promoted to the consumer tier

- if semantics are not defined, it cannot be labeled trustworthy

- if there is no health signal, it cannot be recommended

This is not punishment. It is hygiene. If the org wants trusted data, it has to pay the small price of accountability.

Reduce the Cost of Saying Yes

Most resistance is rational. If being the owner means endless pings, endless meetings, and endless blame, the correct answer is no. So lower the cost:

- templates so teams do not start from scratch

- automated checks so failures are detected early

- clear escalation paths so the owner is not alone

- a standard deprecation process so change does not become personal

- a single place for health so the owner is not answering the same questions all day

Make Ownership Feel Like Control

The goal is not to win an argument about org design. The goal is to get one real product owned in the real world, then repeat without creating a new bottleneck. Once you have one owned product that people trust, the rest of the company stops debating whether ownership is necessary and starts asking how to get the next one.

Closing Thoughts

If you strip all the vocabulary away, “data as a product” is not complicated. It is ownership, a clear contract, discipline around change, and visibility when reality deviates.

Do that, and the properties follow. Discoverable. Trustworthy. Self-describing. Secure. API-like. Not because you declared them, but because you built the conditions that make them true.

If your org cannot answer three questions quickly, you do not have data products:

- Who owns it?

- What does it mean?

- Is it healthy right now?

Everything else is theater: fun to watch, but with no real outcome. If the org will not allow ownership, that is fine. Just be honest about what you are shipping: artifacts, not products.

Good Reads

Book: Domain-Driven Design: Tackling Complexity in the Heart of Software

Article: BoundedContext

Article: How to Move Beyond a Monolithic Data Lake to a Distributed Data Mesh

Book: Designing Big Data Platforms: How to Use, Deploy, and Maintain Big Data Systems