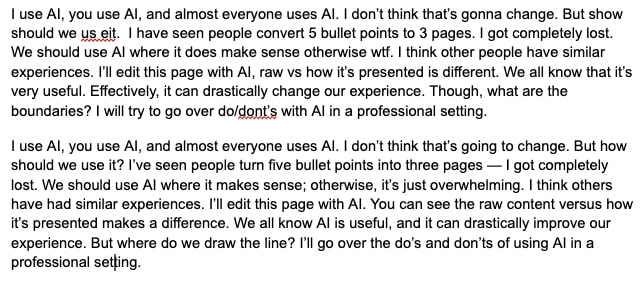

I use AI, you use AI, and almost everyone uses AI. I don’t think that’s going to change. But how should we use it? I’ve seen people turn five bullet points into three pages — I got completely lost. We should use AI where it makes sense; otherwise, it’s just overwhelming. I think others have had similar experiences. I’ll edit this post with AI. You can see the raw content versus how it’s presented makes a difference. We all know AI is useful, and it can drastically improve our experience.

So, where do we draw the line?

AI can drastically improve our workflow, but mindless overuse creates new problems instead of solving old ones. I will try to talk about AI's integration into our workflows, the challenges it presents, the current landscape of its use, and the potential pitfalls we need to navigate.

AI edited introduction

AI is the Default for Newcomers

Now, we have a new workforce entering the professional world. AI is still new, but they have never known professional life without it. This will become the de facto norm for any newcomer. Asking an AI assistant to summarize an email thread or draft a response is becoming as natural as Googling something.

For the new generation, using AI is and will be second nature. Just as previous generations turned to Google as their default source of information, today’s newcomers instinctively rely on AI assistants for tasks that were once manual. That’s okay. Nevertheless, we still need them to make decisions and form opinions. You can’t just offload all of that to AI assistants, because they aren’t that good at it. They lack context and often lack critical thinking ability. So where do we stand with the use of AI?

AI isn’t the Answer to Everything

Let’s acknowledge one thing first. You can do a lot with AI, but you can’t just outsource everything. Overusing these tools generally leads to bloated, robotic, and almost bullshit messages that lose meaning. People hate that. I do. I don’t want to read a three-paragraph message where three simple sentences would do. There are many ways these tools create more problems than they solve.

Take communication threads. An AI-generated summary can be a lifesaver when you’re stuck in an endless thread, whether it’s an email chain or a Slack conversation. No one wants to scroll through 20 back-and-forth messages just to figure out what’s happening. AI can pull out the key points in seconds, turning noise into something useful.

But context matters. AI doesn’t always catch the details that actually shape a conversation. It might be a technical matter or an important decision. If you trust it blindly, you miss things. And suddenly, you’re the person misrepresenting a discussion because AI skipped a crucial nuance.

Same thing with AI-generated writing. AI can fix grammar, tweak tone, and structure your message. By the way, that’s literally how it’s used in this blog. But should it iron out everything so much that it kills personality? Not really. Sometimes it overcorrects. If I want to call something bullshit, it’s bullshit. I don’t want to change that. Generally speaking, an over-polished AI message feels like it was written for a machine, not a person.

Take data analysis. AI can identify patterns and flag anomalies, but should it be the one making business decisions? No. Because AI lacks judgment. It doesn’t know if a metric is off because of an actual issue or just a one-off statistical anomaly. A human still needs to step in, analyze the bigger picture, and decide what to do next.
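To make that split concrete, here is a minimal sketch of what "AI flags, human decides" could look like. It uses a plain z-score check as a stand-in for whatever your analysis tool does, and the function name, the threshold, and the signup numbers are all made up for illustration. The point is that the code only surfaces the odd data point; it never acts on it.

```python
from statistics import mean, stdev


def flag_anomalies(values, threshold=2.0):
    """Return (index, value, z_score) for points far from the mean.

    The threshold is an arbitrary illustrative choice, not a recommendation.
    """
    mu = mean(values)
    sigma = stdev(values)
    if sigma == 0:
        return []  # All values identical, so nothing stands out.
    flagged = []
    for i, v in enumerate(values):
        z = (v - mu) / sigma
        if abs(z) >= threshold:
            flagged.append((i, v, round(z, 2)))
    return flagged


# Hypothetical daily signup counts with one suspicious dip.
daily_signups = [120, 118, 125, 122, 119, 121, 40, 123]

for index, value, z in flag_anomalies(daily_signups):
    # The script stops here: it reports, a person investigates and decides.
    print(f"Day {index}: {value} signups (z={z}) -> needs human review")
```

Whether that dip is a broken signup form or just a public holiday is exactly the part the script can’t tell you.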

AI is Just Another Tool

I’ve trash-talked AI enough at this point, so let’s take a look at what we can use it for. It can process data, find patterns, and speed up decision making. But here’s the reality: AI isn’t here to think for you. If you’re expecting AI to replace human judgment, you’re setting yourself up for failure.

A lot of people assume AI will take over their jobs. But honestly? AI isn’t replacing smart, adaptable people. It’s replacing repetitive tasks that nobody wants to do. I really want AI to suggest things so that I can look at them and, you know, approve! That’s why I like the idea of AI agents. They find things that can be automated, present them, and then you just make the decision. Voilà!

AI agents are basically smart assistants that don’t just follow commands but proactively analyze your work, identify patterns, and suggest automation opportunities. Instead of setting up automations manually, you just review what they find and give the go-ahead.

AI as an Enhancement, Not a Replacement

If AI helps you summarize a massive document, great. If it saves you from rewriting the same email five times, even better. But if you’re using it to dodge critical thinking, communication, or decision-making, that’s where things start to fall apart.

Here’s the simple rule:

- AI should assist, not replace.

- AI should enhance, not dictate.

- AI should accelerate, not bypass human thought.

The people who succeed in the AI-driven workplace won’t be the ones who offload everything to AI. They’ll be the ones who know when to use AI as a tool and when to trust their own expertise. Life has a compounding effect, and AI will amplify it. So, I think the key here is building expertise. If you’re already an expert in an area, AI can be a force multiplier, but you need to get that expertise in the first place.

AI Will Replace Some People

AI isn’t coming for all jobs, but it is coming for people who rely on predictable workflows. If your job is mostly about following patterns, debugging routine issues, or looking up documentation, AI is already learning to do it faster. Businesses just want efficiency. They won’t wait for AI to be perfect. It’s an employer’s market. They’re laying off workers as soon as AI is good enough. AI doesn’t need to replace engineers, just make companies need fewer of them. The same logic applies everywhere. If AI can shrink your team from ten to three, that’s seven people gone. If everyone gets more efficient, there’s less need to hire, and some people will be out of a job.

The ones who survive won’t be the ones doing what AI does slightly better. They’ll be the ones doing what AI can’t. Real-world problem-solving. Critical thinking. Navigating messy, unpredictable situations. I think the worst-hit will be those who assume AI is far from replacing them. People need to adapt to a new reality. Use AI as a force multiplier instead of fighting it. With that being said, there are a few pitfalls in using AI.

Navigating the Pitfalls

In my opinion, the first step is recognizing these pitfalls, and the second is learning how to navigate them. Here are a few pitfalls I’ve identified and what we can do about them. I’m pretty sure there are others, so feel free to ping me about them. I’m happy to add them to this post.

1. Security

The obvious risk is copying and pasting things into an AI tool. It’s very possible for someone to unintentionally leak internal documentation or communication. Even without explicit PII, sensitive operational info can slip into public models. You don’t want that, so use company-approved tools. When using an AI assistant, assess whether the details you provide include critical information. In the end, it’s your judgment call.
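If you want a small safety net on top of your own judgment, a pre-paste check is one option. The sketch below is only an illustration: the patterns, the `redact` helper, and the sample message are all hypothetical, and a real list of what counts as sensitive should come from your security team. It won’t catch everything, so it doesn’t replace the judgment call.

```python
import re

# Hypothetical patterns for illustration only; not a complete list.
SENSITIVE_PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "ipv4": re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b"),
    "secret": re.compile(
        r"\b(?:api[_-]?key|token|password)\s*[:=]\s*\S+", re.IGNORECASE
    ),
}


def redact(text):
    """Replace matches with placeholders and report which kinds were found."""
    findings = []
    for label, pattern in SENSITIVE_PATTERNS.items():
        text, count = pattern.subn(f"[{label.upper()} REDACTED]", text)
        if count:
            findings.append(label)
    return text, findings


# Made-up message someone might paste into an assistant.
draft = "Ping jane.doe@example.com, the db at 10.0.0.12 is down. password=hunter2"
clean, found = redact(draft)
print(clean)
print("Found:", found)
```

A check like this only knows the patterns you give it. Sensitive operational details written in plain sentences will sail right through, which is why the final call still sits with you.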

2. Nuances

Because assistants lack decision-making context, they create seemingly accurate but contextually incorrect summaries. Always verify AI generated summaries or outputs, especially around technical decisions and their rationale.

3. Skill Atrophy

This is a big problem for people who are new to the business setting. They might over-rely on LLMs for coding or debugging. You want to be able to do those yourself. If you can’t, your critical thinking skills can diminish. If AI consistently provides solutions without challenge, people risk losing their edge. Use AI to assist, not replace. Regularly solve problems independently to keep your skills sharp.

4. Authenticity

You know this yourself: you can quickly spot AI-generated text, images, or videos. They often feel formulaic and lack the human touch. I use it once in a while, but for image generation I really have to describe what I want. It generally falls short in branding, marketing, and creative writing. It all sounds too generic. I think it’s fine to use AI to draft ideas, but you need to refine and personalize content to ensure authenticity and emotional resonance.

5. Decision-Making

AI can analyze trends and suggest actions, but it lacks intuition, ethical reasoning, and situational awareness. If you rely too much on it, you risk making dehumanized, tone-deaf, or outright flawed decisions. We should treat AI as an advisor but avoid making it a decision-maker. Human oversight is critical for ethical and nuanced decision-making.

6. Bias

AI models are inherently biased because of their training data. They often reinforce stereotypes or produce skewed results, so be mindful of that. When you lean on a model, you’re biasing yourself towards what it was trained on. Recognize that AI is only as good as its data. We always need to question biases and ensure diverse perspectives.

7. Edge Cases

AI models work well with common patterns. Nevertheless, as you have probably seen for yourself, they struggle with niche, edge-case problems. If people depend too much on AI, they may easily miss cases in complex scenarios. That’s why I think it’s good to leverage AI for common tasks, but manually tackle edge cases to maintain expertise and robustness.

Next Steps

AI is here. It’s everywhere, and it’s not going away. As an engineering leader, I see my role as not just adopting new tech but guiding my team on using it smartly. AI can transform chaos into clarity, saving us time. It can boost our productivity. But when it’s misused, it creates confusion and bloated communication, and strips away the authenticity we all crave. If you or your team over-rely on AI, it makes you lazy and hinders innovation. In my humble opinion, real innovation still demands human judgment, intuition, and critical thinking.

My position is simple: AI is a powerful assistant, not a substitute. It should amplify your skills, not diminish them. People who succeed won’t be those who delegate everything to AI; they’ll be those who intelligently integrate AI into their workflows.